On March 1, DeepSeek’s official Zhihu account published an article titled “Overview of the DeepSeek-V3/R1 Inference System”, which laid out in detail the key design behind the V3/R1 inference system.

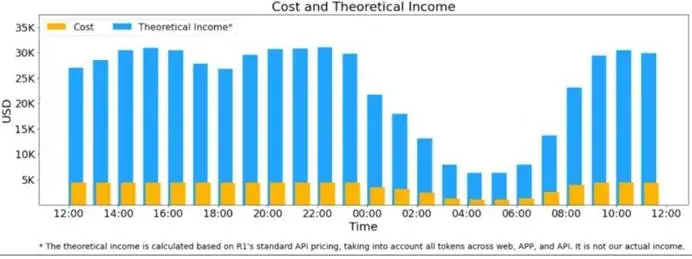

The article disclosed, for the first time, key figures such as DeepSeek’s theoretical cost and profit margin. Assuming a GPU rental cost of $2 per GPU per hour, the total cost comes to $87,072 per day; if every token were billed at DeepSeek-R1 pricing, the theoretical revenue would be $562,027 per day, for a cost-profit margin of 545%. This figure set a new high for profit margins among large AI model providers worldwide.
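The margin follows directly from the two daily figures: profit is revenue minus cost, and the cost-profit margin is profit divided by cost. The short Python sketch below reproduces that arithmetic using only the numbers quoted above. The implied average GPU count is a rough back-calculation, not a figure from the article, and it assumes the entire daily cost is GPU rental at a flat $2 per GPU-hour around the clock.

```python
# Theoretical daily figures quoted in the article.
GPU_HOURLY_RATE = 2.0   # USD per GPU-hour (the article's assumed rental price)
DAILY_COST = 87_072     # USD per day
DAILY_REVENUE = 562_027 # USD per day, if all tokens were billed at R1 prices


def cost_profit_margin(revenue: float, cost: float) -> float:
    """Profit divided by cost, expressed as a percentage."""
    return (revenue - cost) / cost * 100


def implied_avg_gpus(daily_cost: float, hourly_rate: float) -> float:
    """Average number of GPUs rented 24 hours a day that would produce daily_cost.

    Assumption: all cost is GPU rental at a flat hourly rate.
    """
    return daily_cost / (hourly_rate * 24)


profit = DAILY_REVENUE - DAILY_COST
margin = cost_profit_margin(DAILY_REVENUE, DAILY_COST)
gpus = implied_avg_gpus(DAILY_COST, GPU_HOURLY_RATE)

print(f"daily profit: ${profit:,}")
print(f"cost-profit margin: {margin:.0f}%")
print(f"implied average GPUs: {gpus:,.0f}")
```

Running it prints a daily profit of $474,955, a margin of 545%, and roughly 1,814 GPUs in use on average.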

In theory, this works out to a daily profit of $474,955 (about 3.46 million RMB).

However, DeepSeek acknowledges that its actual revenue is substantially lower, because DeepSeek-V3 is priced below R1, only some of its services are paid, and discounts apply during off-peak hours at night.

The article states: “The optimization goals of the DeepSeek-V3/R1 inference system are higher throughput and lower latency.” To pursue both goals, DeepSeek uses large-scale cross-node expert parallelism (EP), which in turn adds considerable complexity to the system. Most of the article explains how EP is used to increase batch size, hide communication latency, and balance load.
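Load balancing under EP means spreading experts across GPUs so that no single GPU becomes the bottleneck. As a hedged illustration only, the sketch below uses a generic greedy heuristic (heaviest expert first, always placed on the currently least-loaded GPU). It is not DeepSeek’s actual balancer; the function names, expert names, and per-expert loads are all hypothetical. Production EP systems can also replicate heavily used experts, which this sketch omits.

```python
import heapq


def balance_experts(expert_loads: dict[str, float], num_gpus: int) -> list[list[str]]:
    """Greedy longest-processing-time assignment of experts to GPUs.

    Experts are visited from heaviest to lightest; each is placed on the
    GPU whose accumulated load is currently the smallest.
    """
    # Min-heap of (accumulated_load, gpu_index); smallest load pops first.
    heap = [(0.0, gpu) for gpu in range(num_gpus)]
    heapq.heapify(heap)
    placement: list[list[str]] = [[] for _ in range(num_gpus)]
    for name, load in sorted(expert_loads.items(), key=lambda kv: kv[1], reverse=True):
        current, gpu = heapq.heappop(heap)
        placement[gpu].append(name)
        heapq.heappush(heap, (current + load, gpu))
    return placement


def gpu_loads(placement: list[list[str]], expert_loads: dict[str, float]) -> list[float]:
    """Total load on each GPU for a given placement."""
    return [sum(expert_loads[e] for e in experts) for experts in placement]


# Hypothetical per-expert token counts observed over some time window.
loads = {"e0": 90, "e1": 70, "e2": 60, "e3": 40, "e4": 30, "e5": 10}
placement = balance_experts(loads, num_gpus=3)
print(placement)
print(gpu_loads(placement, loads))
```

With these sample loads, the heuristic pairs the experts so that each of the three GPUs ends up with a total load of 100.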

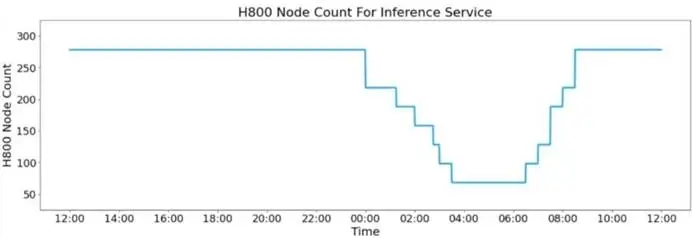

In addition, because service load is high during the day and low at night, DeepSeek runs inference services on all nodes during daytime peak hours, then scales down the number of inference nodes at night and uses the freed capacity for research and training.
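The day/night split boils down to a capacity calculation: keep just enough nodes serving inference to cover the current load, and hand the rest over to research and training. The sketch below illustrates the idea under explicitly stated assumptions: the cluster size, per-node throughput, minimum node count, load values, and function names are all hypothetical, and the article does not describe DeepSeek’s scheduler at this level of detail.

```python
import math


def inference_nodes_needed(load_tokens_per_s: float,
                           node_capacity_tokens_per_s: float,
                           total_nodes: int,
                           min_nodes: int = 1) -> int:
    """Nodes required to serve the given load, clamped to [min_nodes, total_nodes]."""
    needed = math.ceil(load_tokens_per_s / node_capacity_tokens_per_s)
    return max(min_nodes, min(total_nodes, needed))


def split_cluster(load: float, capacity: float, total_nodes: int,
                  min_nodes: int = 1) -> tuple[int, int]:
    """Return (inference_nodes, nodes_freed_for_research_and_training)."""
    serving = inference_nodes_needed(load, capacity, total_nodes, min_nodes)
    return serving, total_nodes - serving


TOTAL_NODES = 300  # hypothetical cluster size
CAPACITY = 50_000  # hypothetical tokens/s that one node can serve

for label, load in [("day peak", 16_000_000), ("night", 5_000_000)]:
    serving, freed = split_cluster(load, CAPACITY, TOTAL_NODES, min_nodes=10)
    print(f"{label}: {serving} inference nodes, {freed} for research/training")
```

With these made-up numbers, the daytime peak demands more than the whole cluster, so all 300 nodes serve inference, while at night 100 nodes suffice and 200 are freed. A real scheduler would likely also add headroom for traffic spikes and account for the time needed to move nodes between roles.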

It is also worth noting that DeepSeek’s Open Source Week has officially ended. At noon on February 21, DeepSeek announced that it would open-source five code repositories, unlocking new content each day, to share its research progress with the global developer community in a fully transparent way. It called the plan “Open Source Week”.

Industry insiders believe that DeepSeek’s Open Source Week is more significant than OpenAI’s recent launch event. By open-sourcing generously and comprehensively, DeepSeek effectively rebutted claims from Europe and the United States that it had deliberately understated its training costs, and showed that there is still substantial room for optimization in the training pipeline.